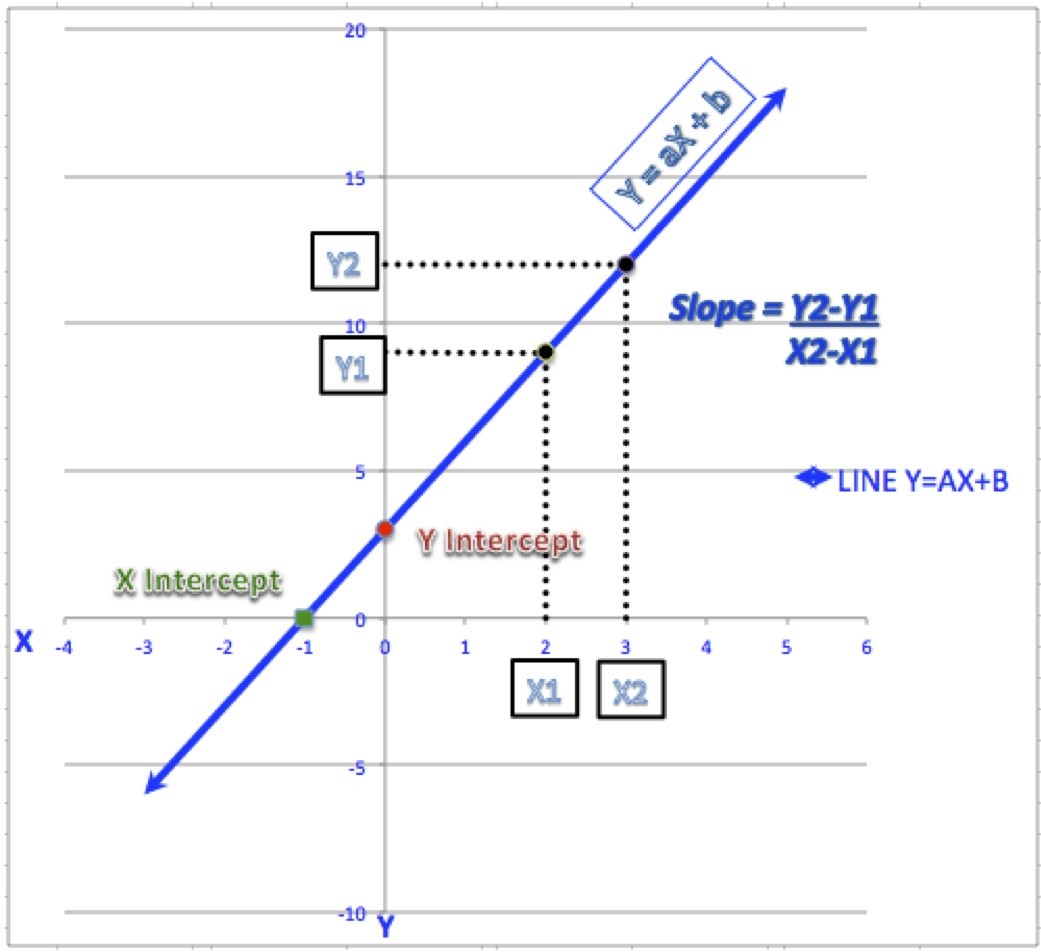

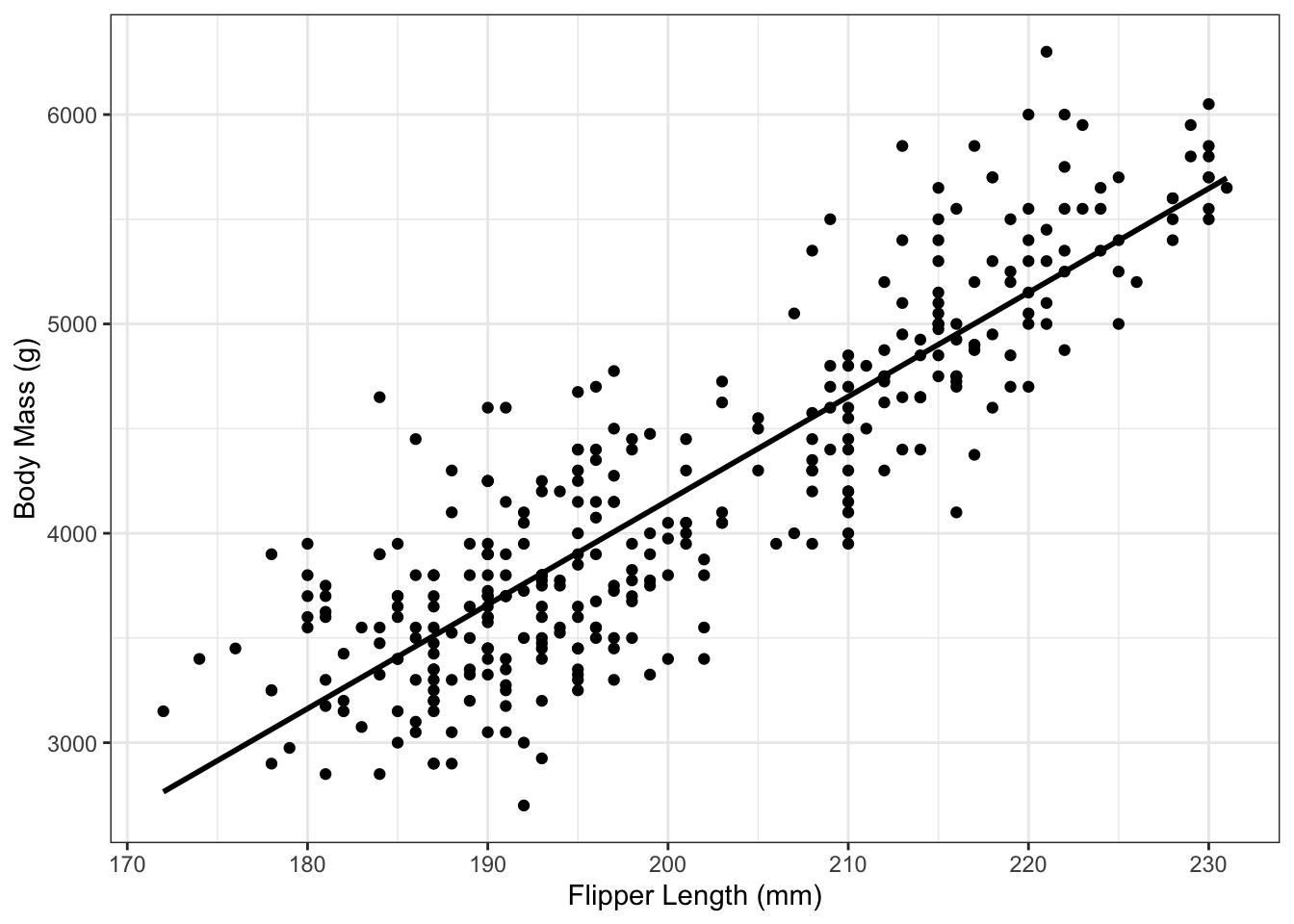

Simple linear regression provides a means to model a straight-line relationship between two variables. In classical (or asymmetric) regression, one variable (Y) is called the response or dependent variable, and the other (X) is called the explanatory or independent variable. This is in contrast to correlation, where there is no distinction between Y and X in terms of which is an explanatory variable and which a response variable.

The model is

$$Y = \alpha + \beta X + \varepsilon$$

where α is the y intercept (the value of Y where X = 0), β is the slope of the line, and ε is a random error term. The same model is often written as

$$Y = \beta_0 + \beta_1 X + \varepsilon$$

where β0 is the y intercept, β1 is the slope of the line, and ε is a random error term.

In the traditional regression model, values of the X variable are assumed to be fixed by the experimenter. The model is still valid if X is random (as is more commonly the case), but only if X is measured without error. If there is substantial measurement error on X, and the values of the estimated parameters are of interest, then errors-in-variables regression should be used. Errors on the response variable are assumed to be independent, identically distributed and normally distributed.

The parameters of the regression model are estimated from the data using ordinary least squares:

$$b = \frac{\sum XY - \frac{(\sum X)(\sum Y)}{n}}{\sum X^2 - \frac{(\sum X)^2}{n}}, \qquad a = \bar{Y} - b\,\bar{X}$$

where

- b is the estimate of the slope coefficient (β),
- X and Y are the individual observations, with sample means X̄ and Ȳ,
- n is the number of bivariate observations,
- a is the estimate of the Y intercept (α) (the value of Y where X = 0).

There are several ways the significance of a regression can be tested. Provided errors are normally and identically distributed, a parametric test can be used. Analysis of variance is often the preferred approach, although one can also use a t-test to test whether the slope is significantly different from zero. In the analysis of variance, the total sum of squares of the response variable (Y) is partitioned into the variation explained by the regression and the unexplained error variation. If errors are not normally and identically distributed, then a randomization test should be used.
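The estimation and testing steps described above can be sketched in a few lines of Python. This is a minimal illustration using NumPy only, not a reference implementation: the function names (`fit_simple_ols`, `anova_partition`, `randomization_test_slope`) and the sample data are hypothetical, and the randomization test shown here shuffles Y and compares absolute slopes, which is one common variant among several.

```python
import numpy as np


def fit_simple_ols(x, y):
    """Return (a, b): least-squares intercept and slope for Y = a + b*X."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    n = x.size
    # Corrected sum of cross-products and corrected sum of squares of X.
    sxy = np.sum(x * y) - x.sum() * y.sum() / n
    sxx = np.sum(x ** 2) - x.sum() ** 2 / n
    b = sxy / sxx
    a = y.mean() - b * x.mean()
    return a, b


def anova_partition(x, y):
    """Split the total sum of squares of Y into regression and error parts."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    a, b = fit_simple_ols(x, y)
    fitted = a + b * x
    ss_total = np.sum((y - y.mean()) ** 2)
    ss_regression = np.sum((fitted - y.mean()) ** 2)
    ss_error = np.sum((y - fitted) ** 2)
    # F statistic with 1 and n - 2 degrees of freedom.
    f_stat = ss_regression / (ss_error / (x.size - 2))
    return ss_total, ss_regression, ss_error, f_stat


def randomization_test_slope(x, y, n_perm=2000, seed=0):
    """Approximate two-sided p-value for the slope by shuffling Y."""
    rng = np.random.default_rng(seed)
    _, b_obs = fit_simple_ols(x, y)
    extreme = 0
    for _ in range(n_perm):
        _, b_perm = fit_simple_ols(x, rng.permutation(y))
        if abs(b_perm) >= abs(b_obs):
            extreme += 1
    # Add one to numerator and denominator so the p-value is never zero.
    return (extreme + 1) / (n_perm + 1)


if __name__ == "__main__":
    x = [1, 2, 3, 4, 5]
    y = [2.1, 3.9, 6.2, 7.8, 10.1]
    print(fit_simple_ols(x, y))
    print(anova_partition(x, y))
    print(randomization_test_slope(x, y))
```

The F statistic would normally be compared against an F distribution with 1 and n − 2 degrees of freedom; that lookup is left out here to keep the sketch free of extra dependencies.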
Source: "Linear regression - Maximum Likelihood Estimation", Lectures on probability theory and mathematical statistics. StatLect has several pages on maximum likelihood estimation that show how to derive the estimators of the parameters of a number of distributions.

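The objective is to estimate the parameters of the linear regression model

$$y_i = x_i \beta + \varepsilon_i$$

where $y_i$ is the dependent variable, $x_i$ is a $1 \times K$ vector of regressors, $\beta$ is the $K \times 1$ vector of regression coefficients to be estimated and $\varepsilon_i$ is an unobservable error term.

The regression equations can be written in matrix form as

$$y = X\beta + \varepsilon$$

where the $N \times 1$ vector of observations of the dependent variable is denoted by $y$, the $N \times K$ matrix of regressors is denoted by $X$, and the $N \times 1$ vector of error terms is denoted by $\varepsilon$.

The vector of errors $\varepsilon$ has a multivariate normal distribution conditional on $X$, with mean $0$ and covariance matrix $\sigma^2 I$. Furthermore, it is assumed that the matrix of regressors $X$ has full rank.

The assumption that the covariance matrix of $\varepsilon$ is diagonal implies that the error terms are mutually independent. Moreover, they all have a normal distribution with mean $0$ and variance $\sigma^2$. By the properties of linear transformations of normal random variables, the dependent variable $y_i$ is also conditionally normal, with mean $x_i\beta$ and variance $\sigma^2$. The log-likelihood is therefore

$$\ln L(\beta, \sigma^2; y, X) = -\frac{N}{2}\ln(2\pi) - \frac{N}{2}\ln(\sigma^2) - \frac{1}{2\sigma^2}\sum_{i=1}^{N}(y_i - x_i\beta)^2$$

The first order conditions for a maximum are

$$\nabla_\beta \ln L = \frac{1}{\sigma^2}\sum_{i=1}^{N} x_i^\top (y_i - x_i\beta) = 0, \qquad \frac{\partial \ln L}{\partial \sigma^2} = -\frac{N}{2\sigma^2} + \frac{1}{2\sigma^4}\sum_{i=1}^{N}(y_i - x_i\beta)^2 = 0$$

where $\nabla_\beta$ indicates the gradient calculated with respect to $\beta$, that is, the vector of the partial derivatives of the log-likelihood with respect to the entries of $\beta$. The first of the two equations is satisfied if $\beta = (X^\top X)^{-1} X^\top y$. Setting the partial derivative of the log-likelihood with respect to $\sigma^2$ equal to zero and substituting this value of $\beta$, the system of first order conditions is solved.

Thus, the maximum likelihood estimators are:

- for the regression coefficients, the usual OLS estimator $\hat{\beta} = (X^\top X)^{-1} X^\top y$;
- for the variance of the error terms, $\hat{\sigma}^2 = \frac{1}{N}\sum_{i=1}^{N}(y_i - x_i\hat{\beta})^2$.

The vector of estimators is asymptotically normal with asymptotic mean equal to the true parameter values. The Hessian, that is, the matrix of second derivatives, can be written as a block matrix whose off-diagonal blocks have zero expectation. As a consequence, the asymptotic covariance matrix is block diagonal:

$$V = \begin{bmatrix} \sigma^2 \left(\mathrm{E}[x_i^\top x_i]\right)^{-1} & 0 \\ 0 & 2\sigma^4 \end{bmatrix}$$

This means that the probability distribution of the vector of parameter estimates can be approximated by a multivariate normal distribution with mean equal to the true parameter values and covariance matrix $\frac{1}{N}V$.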
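As a rough numerical check of the estimators discussed above, the following sketch computes the maximum likelihood estimates for a normal linear regression and evaluates the log-likelihood. It assumes the standard result that the coefficient estimator coincides with OLS and that the variance estimator is the sum of squared residuals divided by N (not N − K). The function names and the simulated data are hypothetical.

```python
import numpy as np


def mle_linear_regression(X, y):
    """Return (beta_hat, sigma2_hat) for y = X @ beta + normal errors."""
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float)
    # The ML estimator of beta is the OLS solution of the least-squares problem.
    beta_hat, *_ = np.linalg.lstsq(X, y, rcond=None)
    residuals = y - X @ beta_hat
    # The ML variance estimator divides by N, so it is biased in small samples.
    sigma2_hat = residuals @ residuals / y.size
    return beta_hat, sigma2_hat


def log_likelihood(beta, sigma2, X, y):
    """Gaussian log-likelihood of the linear regression model."""
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float)
    n = y.size
    residuals = y - X @ np.asarray(beta, dtype=float)
    return (
        -0.5 * n * np.log(2 * np.pi)
        - 0.5 * n * np.log(sigma2)
        - residuals @ residuals / (2 * sigma2)
    )


if __name__ == "__main__":
    # Simulated data: intercept 1.0, slope 2.0, error standard deviation 0.5.
    rng = np.random.default_rng(1)
    X = np.column_stack([np.ones(50), rng.normal(size=50)])
    y = X @ np.array([1.0, 2.0]) + rng.normal(scale=0.5, size=50)
    beta_hat, sigma2_hat = mle_linear_regression(X, y)
    print(beta_hat, sigma2_hat)
    print(log_likelihood(beta_hat, sigma2_hat, X, y))
```

Perturbing either `beta_hat` or `sigma2_hat` and re-evaluating `log_likelihood` should never give a larger value, which is a quick way to confirm that the closed-form estimates sit at the maximum.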